TL;DR

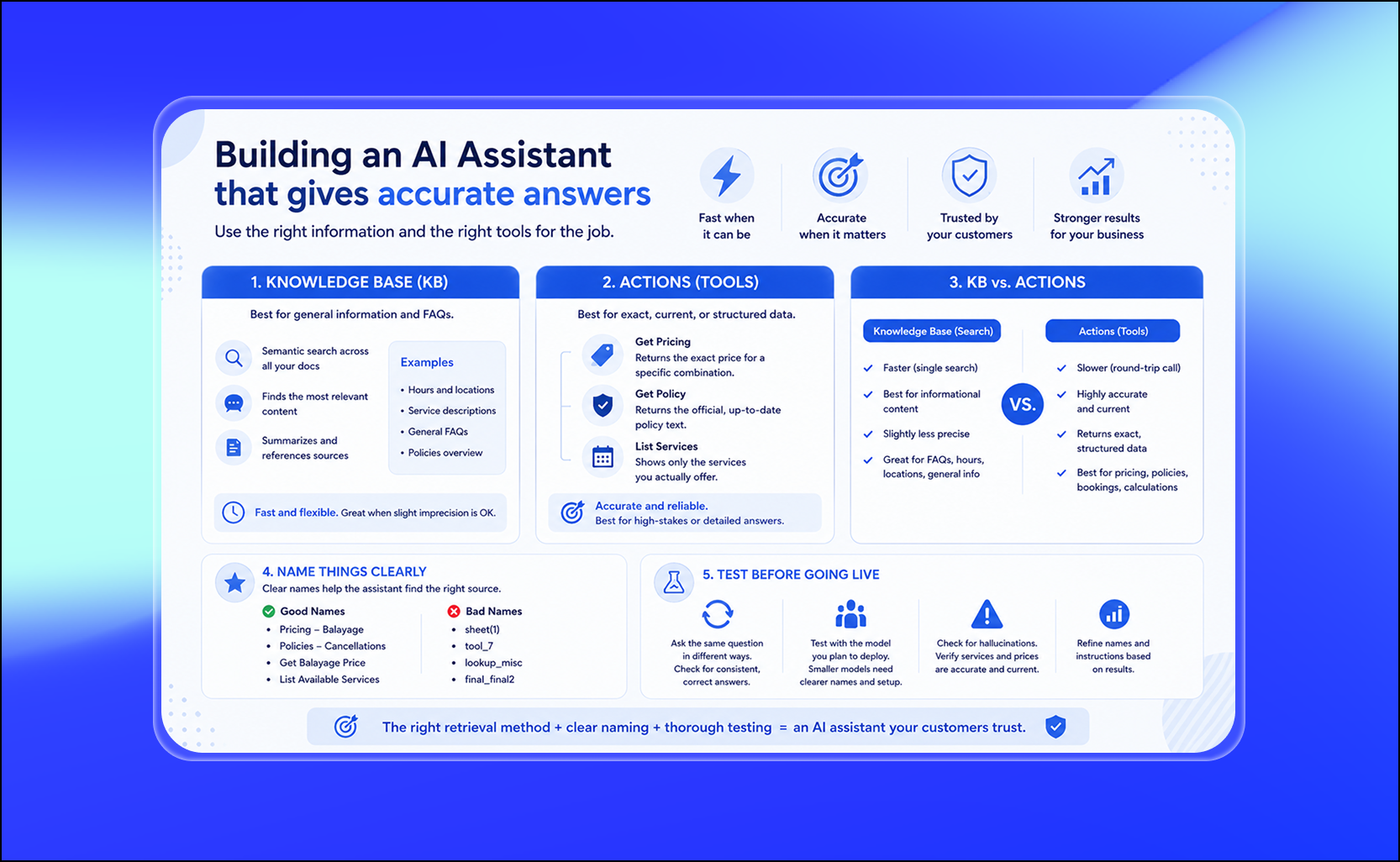

- If you're building an AI assistant for your business, whether for customer support, bookings, or answering FAQs, one of the most important decisions you'll make is how the assistant finds and retrieves information.

- Get this wrong and your assistant will give vague answers, hallucinate prices, or confuse customers. Get it right and it becomes one of the most reliable tools in your business.

- This guide breaks down the two core retrieval methods (knowledge base search and actions), when to use each, why naming your documents and tools correctly is critical, and how to test your assistant before going live.

What Is an AI Assistant Knowledge Base and how does it work?

A knowledge base is a collection of documents, text, and structured content that you load into your AI assistant. This can include your website URL, service descriptions, pricing sheets, cancellation policies, FAQs, location and hours information, and anything else you want the assistant to reference when answering questions.

When a user asks a question, the assistant performs a broad semantic search across everything in the knowledge base. Semantic search means the assistant doesn't just look for exact keyword matches; it matches on the meaning behind the question and finds the most relevant chunks of information, even if the wording is different.

After finding the relevant content, the assistant summarizes the answer and can quote or link back to the source document.
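The retrieve-then-answer flow above can be sketched in a few lines. This is a toy stand-in, not how any particular platform implements it: it ranks documents by word-overlap cosine similarity, whereas a real semantic search uses an embedding model so that differently worded questions still match. The document names and texts are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base: document name -> text chunk.
KNOWLEDGE_BASE = {
    "FAQ - General": "We are open Monday to Saturday from 9am to 6pm and closed on Sunday.",
    "Services - Full Service Menu": "We offer haircuts, balayage, highlights, toner and blowouts.",
    "Policies - Cancellations and No-Shows": "Cancel at least 24 hours before your appointment to avoid a fee.",
}

def vectorize(text):
    """Turn text into a bag-of-words count vector (a crude stand-in for an embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def search(question, top_k=1):
    """Return the names of the top_k documents most similar to the question."""
    query = vectorize(question)
    scored = [(cosine(query, vectorize(text)), name) for name, text in KNOWLEDGE_BASE.items()]
    scored.sort(reverse=True)
    return [name for score, name in scored[:top_k] if score > 0]

print(search("do you offer highlights?"))
print(search("what are your hours on Sunday?"))
```

The assistant would then summarize the returned chunk and, if instructed, cite the document name as the source.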

This is the foundation of most FAQ-style AI assistants, and for good reason: it is fast, flexible, and requires no custom development to set up.

When a Knowledge Base is enough: The FAQ Assistant

For a general FAQ assistant, loading everything into the knowledge base is the right move: website URL, services list, pricing overview, policies, hours, and locations. Dump it all in.

The assistant's job in this case is:

- Perform a broad semantic search across all available documents

- Identify the most relevant answer

- Summarize it clearly for the user

- Reference or link the source, if you tell it to in the assistant's instructions

This works well because FAQ questions are informational and forgiving. A customer asking "do you offer highlights?" or "what are your hours on Sunday?" does not need a mathematically precise answer. They need a helpful, accurate summary, and the knowledge base handles that well.

The knowledge base approach is also the faster of the two retrieval methods. Because it is a single semantic search rather than a round-trip call to an external system, responses come back quickly. Speed matters in conversational AI because users expect near-instant replies.

If your assistant is primarily answering informational questions, a well-organized knowledge base is all you need to get started.

When a Knowledge Base is not enough: Pricing, booking, and edge cases

Here is where many teams make a critical mistake. They assume that because the knowledge base works for FAQs, it will also work for pricing questions, booking rules, and policy edge cases. It won't, at least not reliably.

When a customer asks "how much would a balayage cost for long, thick hair with toner and a blowout?", the assistant needs to find a very specific answer. If your pricing lives inside a paragraph of website copy, mixed in with marketing language and general descriptions, the assistant has to guess. And when AI assistants guess on pricing, they get it wrong in ways that erode trust and create real business problems.

The solution is to add actions, also called tools, that allow the assistant to retrieve information in a deterministic and systematic way.

An action is essentially a direct lookup: a function the assistant can call that returns a precise, structured answer. Instead of searching broadly and summarizing, the assistant goes straight to the right source, for example a Google Sheets action, and returns the exact data.

Examples of actions that solve common accuracy problems:

Get Pricing (hairLength, thickness, hairType, toner, treatment, blowout): returns the exact price for a specific combination of services, eliminating any ambiguity or outdated information from old KB documents.

Get Policy (policyName): returns the canonical, current version of a specific policy such as cancellations, refunds, or late arrivals, without the assistant having to interpret or paraphrase.

List Services: returns the official list of services the business currently offers. This is especially important because AI assistants can sometimes confidently describe services that do not exist, a problem known as hallucination. How often this happens also depends on the AI model. Grounding the assistant in a live services list helps prevent it.
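To make the idea of a deterministic lookup concrete, here is a minimal sketch of what two of these actions could look like behind the scenes. It is an illustration under stated assumptions, not a real integration: the price table, add-on prices, service list, and the reduced parameter set (only length, thickness, toner, and blowout) are all hypothetical, and in practice the rows would likely come from a spreadsheet or booking system.

```python
# Hypothetical price table; every number here is made up for illustration.
BASE_PRICES = {
    ("short", "fine"): 120,
    ("short", "thick"): 140,
    ("long", "fine"): 180,
    ("long", "thick"): 210,
}
ADD_ON_PRICES = {"toner": 30, "blowout": 45}
CURRENT_SERVICES = ["balayage", "highlights", "toner", "blowout"]

def get_pricing(hair_length, thickness, toner=False, blowout=False):
    """Return one exact, structured price for a balayage combination."""
    key = (hair_length, thickness)
    if key not in BASE_PRICES:
        # Fail loudly instead of letting the assistant guess a number.
        raise ValueError(f"No price configured for {key}")
    add_ons = [name for name, chosen in (("toner", toner), ("blowout", blowout)) if chosen]
    total = BASE_PRICES[key] + sum(ADD_ON_PRICES[name] for name in add_ons)
    return {"service": "balayage", "add_ons": add_ons, "total": total, "currency": "USD"}

def list_services():
    """Return only the services that are offered today."""
    return list(CURRENT_SERVICES)

quote = get_pricing("long", "thick", toner=True, blowout=True)
print(quote["total"], quote["currency"])
```

The same inputs always produce the same output, and an unknown combination raises an error rather than returning a plausible-looking guess.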

With actions in place, the assistant stops being a system that searches and guesses. It becomes a system that looks up and answers. That is a fundamental shift in reliability.

Knowledge Base vs. Actions: Speed and Accuracy trade-offs

A question that comes up frequently when designing AI assistants is whether to use the knowledge base or actions for a particular type of question.

Here is how to think about the trade-off:

- Knowledge base retrieval is faster. It is a single semantic search that happens inside the assistant itself: no external API call, no round trip to a database. This makes it ideal for informational questions where response speed matters and the stakes of a slightly imprecise answer are low. You can also instruct your assistant to "Only answer questions with the Knowledge information", which helps keep its answers grounded in your content.

- Actions are slower but more accurate. Every action involves a round-trip call: the assistant decides to invoke the action, sends the request, waits for the response, and then formulates the answer. This adds latency. But the answer that comes back is precise, current, and authoritative.

The practical rule: use the knowledge base for informational content, and use actions for anything that must be exact. Pricing, eligibility, current availability, formulas, calculations, booking rules, and policy specifics should all go through actions. Do not add actions just to have them; add them only where accuracy genuinely requires it.

When a user asks a pricing question and only a knowledge base is available, here is what happens behind the scenes: the assistant searches all available documents simultaneously, finds pricing mentioned in the FAQ, the services page, maybe an old PDF, and a promotional page, and then picks what seems most relevant. It might get the right number. It might get an outdated one. It might average across two conflicting sources. None of that is acceptable for pricing.
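The conflict described above is easy to reproduce. The sketch below scans a hypothetical knowledge base for every price mentioned next to a service name; the document names, texts, and prices are invented for illustration. When more than one number comes back, a search-and-summarize assistant has to pick one.

```python
import re

# Hypothetical knowledge base in which the same service is priced in several places.
DOCUMENTS = {
    "FAQ - General": "Balayage starts at $180 for most clients.",
    "Services - Full Service Menu": "Balayage from $210, includes consultation.",
    "old_brochure.pdf": "Balayage special: $150 this spring only!",
}

def candidate_prices(service):
    """Collect every dollar amount found in documents that mention the service."""
    found = {}
    for name, text in DOCUMENTS.items():
        if service.lower() in text.lower():
            prices = [int(p) for p in re.findall(r"\$(\d+)", text)]
            if prices:
                found[name] = prices
    return found

candidates = candidate_prices("balayage")
distinct = {price for prices in candidates.values() for price in prices}
print(f"{len(distinct)} different prices found across {len(candidates)} documents")
```

Running a check like this against your own content before launch is a quick way to find the documents that need cleaning up or replacing with an action.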

With a properly named pricing action, the assistant calls one function, gets one answer, and returns it with confidence.

Why naming your documents and Actions is critical for AI Assistant performance

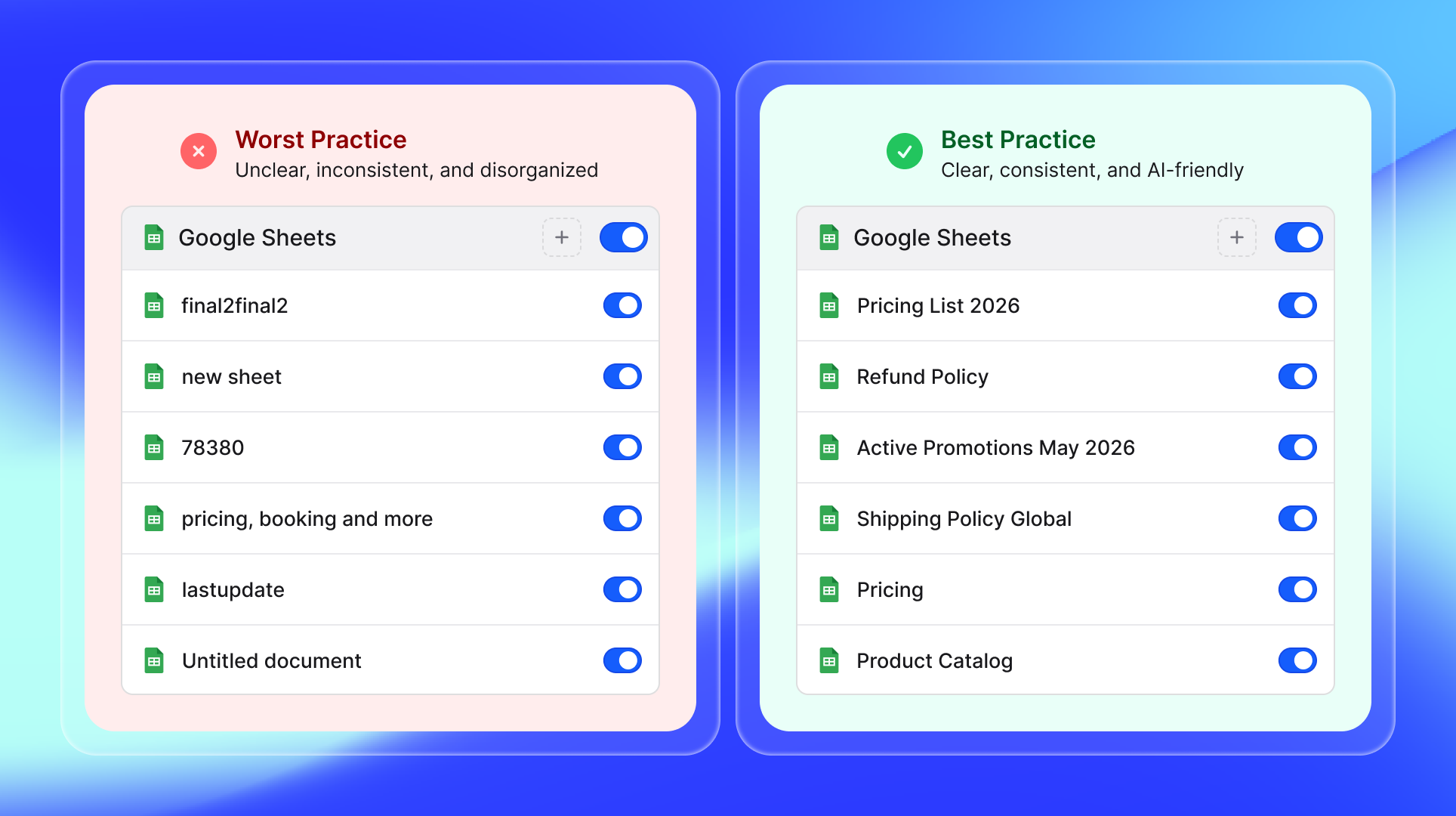

Clear, descriptive action names matter: avoid cryptic, generic titles in your integrations and documentation. Choose specific, meaningful names like “Pricing list” or “Refund Policy” to ensure clarity for your team and users.

This is one of the most overlooked factors in AI assistant design, and it directly affects how well your assistant performs, especially with lighter or smaller models.

The assistant uses the names of your documents and actions as signals for which source is authoritative. When a user asks a pricing question, the assistant scans the names of all available resources and decides where to look. If your pricing document is called "Pricing: Balayage Services", it will go there first. If it is called "sheet(1)" or "Code837720" or "final_final2," the assistant has no idea what is in there and may skip it entirely or rank it incorrectly.

Good document names for a service business AI assistant:

- Pricing - Balayage

- Pricing - Color Services

- Pricing - Add-Ons

- Policies - Cancellations and No-Shows

- Policies - Refunds

- Services - Full Service Menu

- FAQ - General

- FAQ - Booking and Appointments

Good action names for an AI assistant:

- Get Balayage Price

- Get Cancellation Policy

- Get AddOn Prices

- List Available Services

- Get Booking Rules

Bad names that cause assistant errors:

- run_query_12

- tool_7

- lookup_misc

- doc_v3_final

- new pricing (copy)

- untitled
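A rough naming check can catch names like these before they reach the assistant. The heuristic below is only a sketch: the list of generic words and the "at least two meaningful words" threshold are assumptions chosen to fit the examples in this guide, not a standard rule.

```python
import re

# Hypothetical list of words that carry no information about a document's content.
GENERIC_WORDS = {"tool", "doc", "sheet", "query", "misc", "untitled",
                 "copy", "final", "new", "run", "lookup"}

def is_descriptive(name):
    """Return True if the name contains at least two meaningful words."""
    words = re.findall(r"[a-z]+", name.lower())
    meaningful = [w for w in words if w not in GENERIC_WORDS and len(w) > 2]
    return len(meaningful) >= 2

for name in ["Get Balayage Price", "Pricing - Add-Ons", "tool_7", "new pricing (copy)"]:
    print(f"{name!r}: {'ok' if is_descriptive(name) else 'rename'}")
```

A check like this will not judge whether a name is accurate, only whether it says anything at all, so it complements a manual review rather than replacing it.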

The naming principle extends to how you describe actions in your assistant configuration. A clear description: "Returns the exact price for balayage services based on hair length, thickness, and selected treatments" helps the assistant decide when to invoke it. A vague description like "gets some pricing info" gives it almost nothing to work with.
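As an illustration, an action definition with a clear name and description might look like the sketch below. Many tool-calling setups describe an action with a name, a description, and a JSON Schema for its parameters, but the exact field names vary by platform, so treat this layout, the parameter names, and the eight-word threshold as assumptions.

```python
# Hypothetical action definition; field names vary by platform.
GET_BALAYAGE_PRICE = {
    "name": "get_balayage_price",
    "description": ("Returns the exact price for balayage services based on "
                    "hair length, thickness, and selected treatments."),
    "parameters": {
        "type": "object",
        "properties": {
            "hair_length": {"type": "string", "enum": ["short", "medium", "long"]},
            "thickness": {"type": "string", "enum": ["fine", "medium", "thick"]},
            "toner": {"type": "boolean"},
            "blowout": {"type": "boolean"},
        },
        "required": ["hair_length", "thickness"],
    },
}

VAGUE_ACTION = {"name": "lookup_misc", "description": "gets some pricing info"}

def definition_problems(definition, min_words=8):
    """List simple, mechanical problems with an action definition."""
    problems = []
    if len(definition.get("description", "").split()) < min_words:
        problems.append("description is too short to tell the assistant when to call it")
    if not definition.get("parameters", {}).get("properties"):
        problems.append("no parameters are documented")
    return problems

print(definition_problems(GET_BALAYAGE_PRICE))
print(definition_problems(VAGUE_ACTION))
```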

Consistent, human-readable naming is not just an organizational preference. It is a functional requirement for a well-performing AI assistant.

This doesn't mean you need a separate document for every item. Related content can be grouped into a single, clearly named document.

The simple decision framework: KB Doc or Action?

Use this as a starting point when deciding how to structure your assistant's information:

- If the question is informational, such as hours, location, general service descriptions, brand story, or general FAQs, a knowledge base document is the right tool. Load it in, name it clearly, and the assistant will handle it.

- If the question requires exact, current, or structured data, such as pricing with multiple variables, booking eligibility, refund calculations, or policy specifics with edge cases, build an action. Name it clearly, document what it returns, and test it thoroughly.

- If the question could lead the assistant to describe something that doesn't exist, like a service you used to offer or a promotion that ended, add a List Services or List Current Promotions action, or a knowledge base document, that grounds the assistant in what is actually available today.
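The framework above can be written down as a small lookup, which is handy when you are sorting a long list of content types. The topic labels are hypothetical and simply mirror the examples in the three rules.

```python
# Hypothetical topic labels that mirror the examples in the framework.
KB_TOPICS = {"hours", "location", "service descriptions", "brand story", "general faq"}
ACTION_TOPICS = {"pricing", "booking eligibility", "refund calculation", "policy edge case"}
GROUNDING_TOPICS = {"discontinued service", "expired promotion"}

def recommend_source(topic):
    """Suggest where a type of content should live."""
    topic = topic.strip().lower()
    if topic in ACTION_TOPICS:
        return "action"
    if topic in GROUNDING_TOPICS:
        return "grounding list (action or KB document)"
    if topic in KB_TOPICS:
        return "knowledge base document"
    return "unclassified: decide whether the answer must be exact"

for topic in ["Hours", "Pricing", "Expired promotion", "Gift cards"]:
    print(f"{topic}: {recommend_source(topic)}")
```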

How to test your AI Assistant before going live

The difference between a well-designed AI assistant and a frustrating one often comes down to testing. Here is a practical approach:

Ask the same pricing or policy question ten times in different ways. Rephrase it, abbreviate it, ask it casually and formally. Check whether the assistant consistently finds the right answer or whether it drifts across sessions. Inconsistency is a sign of a naming problem or a missing action.
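This check can be scripted. The sketch below assumes you can call your assistant through some function that takes a question and returns a text reply; here that function is a stub named `fake_assistant`, and its replies and prices are invented so the example runs on its own.

```python
import re

def extract_price(reply):
    """Pull the first dollar amount out of a reply, or None if there is none."""
    match = re.search(r"\$(\d+(?:\.\d{2})?)", reply)
    return float(match.group(1)) if match else None

def check_consistency(ask, paraphrases):
    """Ask every paraphrase and report whether all replies quote the same price."""
    answers = {question: extract_price(ask(question)) for question in paraphrases}
    distinct = set(answers.values())
    return {"consistent": len(distinct) == 1 and None not in distinct, "answers": answers}

def fake_assistant(question):
    """Stub standing in for a real assistant; it drifts when 'toner' is not mentioned."""
    if "toner" in question.lower():
        return "That would be $285 in total."
    return "Balayage starts at $210."

PARAPHRASES = [
    "How much is balayage for long, thick hair with toner and a blowout?",
    "price balayage long thick + toner + blowout?",
    "balayage, long thick hair w/ blowout, what's the cost?",
]

report = check_consistency(fake_assistant, PARAPHRASES)
print("consistent" if report["consistent"] else "inconsistent", report["answers"])
```

Replace the stub with a call to your real assistant and extend the paraphrase list to ten or more variations per question.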

Test with the models you are actually considering deploying. Stronger models like Claude Sonnet handle ambiguous routing better: they are more likely to know when to call an action versus search the knowledge base, even with imperfect naming. Lighter or faster models are more sensitive to naming quality. A badly named action that a strong model routes to correctly may be completely ignored by a smaller model.

This is why testing across models matters. The same assistant configuration can behave meaningfully differently depending on which underlying model is powering it. If you are optimizing for cost by using a lighter model, you need to compensate with better naming, clearer action descriptions, and tighter knowledge base organization.

Check specifically for hallucinated services or prices. Ask the assistant about things you do not offer and see how it responds. If it confidently describes a service you no longer offer, you have three options: add a List Services action that returns only what is currently available, add a knowledge base document that explicitly defines your current service menu, or, if you have crawled your website, audit it and remove any outdated pages or content before the assistant picks them up. You can always re-index it with one click from Knowledge.
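A simple probe can automate the first part of this check. As before, `fake_assistant` is a stub standing in for your real assistant, and the discontinued services and refusal phrases are assumptions you would replace with your own.

```python
# Hypothetical phrases that signal the assistant correctly declined.
REFUSAL_MARKERS = ("do not offer", "don't offer", "not currently offer", "no longer offer")

def probe_discontinued(ask, services):
    """Return the services the assistant described instead of declining."""
    failures = []
    for service in services:
        reply = ask(f"Do you offer {service}? How much is it?").lower()
        if not any(marker in reply for marker in REFUSAL_MARKERS):
            failures.append(service)
    return failures

def fake_assistant(question):
    """Stub that hallucinates a price for a perm but declines everything else."""
    if "perm" in question.lower():
        return "Yes! A perm costs $90 and takes two hours."
    return "Sorry, we no longer offer that service."

failures = probe_discontinued(fake_assistant, ["perm", "keratin treatment"])
print("Hallucinated services:", failures)
```

Phrase matching is a blunt instrument, so read the flagged replies yourself before deciding which of the three fixes to apply.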

To build a reliable AI assistant, use the right tool for the job: lean on your knowledge base for quick info and actions for accurate, up-to-date data. Clear naming and strong testing practices ensure your assistant delivers answers users can trust.

Building an AI Assistant that gives accurate answers

Designing an AI assistant that customers trust comes down to three decisions made well.

- Choose the right retrieval method for each type of information. Use knowledge base search for broad informational questions where speed matters and slight imprecision is acceptable. Use actions for pricing, policies, booking rules, and anything that must be exact.

- Name everything clearly and consistently. Your documents and actions are the map the assistant uses to navigate your information. Descriptive, human-readable names are not optional: they directly determine whether the assistant finds the right answer.

- Test with the model you plan to deploy, not just the most powerful one available. Different models handle ambiguous retrieval differently, and performance gaps become visible under realistic testing conditions.

An AI assistant built this way, with a clean knowledge base, well-scoped actions, and thoughtful naming, does not just answer questions. It becomes a reliable extension of your business that customers trust and return to.

Ready to start?

The best way to learn is to build. Load your content, ask your ten most common customer questions, and see what happens. You will know within an hour whether you are on the right track.

Ready to try it? Invent Business gives you a full 14-day free trial and a permanent free plan with 100 messages per month.

Start building your assistant today and see what it can do for your customers.

FAQs

1. What platforms offer easy tools to train an AI chatbot without coding?

The main categories are AI-trained platforms and visual flow builders. AI-trained platforms like Invent, Chatbase, SiteGPT, and CustomGPT simply require uploading content or providing URLs. Visual flow builders like Landbot, Voiceflow, and Tars use drag-and-drop interfaces. For document-heavy knowledge bases, Invent, Chatbase and CustomGPT focus on training AI assistants using proprietary documents, websites, and internal knowledge bases.

2. Best platforms for developing custom AI chatbots

It depends on your use case:

- Invent: Best for founders, small teams, and enterprises in any vertical that need WhatsApp, SMS, Gmail, Instagram, and Messenger, with a unified inbox.

- Botpress: Best for teams that need multi-channel deployment and may want to add code later.

- Voiceflow: Best for building scalable support agents. StubHub International built and launched a powerful AI customer support agent in 90 days using Voiceflow, empowering non-technical teams.

- CustomGPT: Best for businesses that need agents trained on domain-specific data integrated with existing knowledge bases.

- ManyChat / Chatfuel: Best for WhatsApp, Instagram, and social media automation.

3. What are the key components of an effective AI chatbot training strategy?

Based on what the platforms converge on, the key components are:

- Organized, well-named content: Upload documents, PDFs, URLs, and FAQs with clear, descriptive names so the assistant knows where to look.

- Actions for accuracy: Add actions or structured documents and files for pricing, policies, and anything that must be exact.

- Testing and iteration: AI output will always vary. Test by asking the same question in different ways and compare answers for consistency.

- Analytics review: Platforms like Invent provide detailed insights into conversation performance, CSAT and more.

- Model selection: Accuracy varies significantly between models.

4. What are the pricing models for chatbot training platforms?

There are four common pricing structures:

- Subscription tiers: Free to ~$500/mo (e.g., free ≈100 messages + 1 bot; higher tiers up to ~40k messages + analytics).

- Per-channel pricing: Different prices per channel (e.g., Facebook/IG vs. WhatsApp).

- Usage/credits: You pay by messages/credits; higher-end models can cost many more credits per reply, so spend can vary.

- Enterprise/custom: Typically starts around ~$300+/mo with custom contracts for high volume and added security/support.

5. Free trials for AI chatbot building platforms

Most major platforms offer some form of free access to test before committing. Here is what the landscape looks like:

Platforms with free tiers or trials:

- Invent: free plan with 100 message credits per month and full feature access, plus a 14-day free trial of the business subscription

- Chatbase: free plan with 100 message credits per month

- Botpress: free tier with usage-based charges

- Voiceflow: free tier available

- ManyChat: free plan for basic automation

- Hyperleap: free tier

- Quidget: free setup with limited monthly conversations

- SiteGPT: 7-day free trial with full feature access