TL;DR

If you've ever deployed an AI chatbot and found your actual costs higher than the estimate, you already know something important: AI usage is more dynamic than any calculator can fully capture upfront. That's not a problem; it's just the nature of real conversations. Understanding why costs vary is the first step to controlling them.

"The most expensive thing in AI isn't the model. It's the tokens you didn't realize you were sending."

G.H.

1. What estimators get right (and their limits)

Cost calculators ask for two inputs: daily message volume and AI model. They then multiply a fixed cost per message by that volume.

Example:

100 messages/day × 30 days × $0.0025/message ≈ $7.50/month

This is a smart baseline, and a great way to compare models or estimate ROI before going live. What it can't predict upfront is how your real conversations will behave: how long they run, what features are active, or whether you'll have traffic spikes. That's not a calculator flaw. It's simply the difference between an estimate and a live environment.
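The baseline arithmetic above can be sketched in a few lines of Python. This is a minimal illustration, not any particular calculator's implementation; the function name and parameters are made up for this example.

```python
def estimate_monthly_cost(messages_per_day: int, cost_per_message: float, days: int = 30) -> float:
    """Flat-rate estimate: message volume times a fixed cost per message."""
    return messages_per_day * days * cost_per_message


# Numbers from the example above: 100 messages/day at $0.0025 per message.
baseline = estimate_monthly_cost(messages_per_day=100, cost_per_message=0.0025)
print(f"${baseline:.2f}/month")  # prints "$7.50/month"
```

Note what the function has no inputs for: conversation length, active features, or traffic spikes. Those are exactly the things a flat estimate can't see.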

2. How context drives costs

AI doesn't just read your latest message. It reads everything, every time.

Each response includes:

- System prompt (instructions)

- Knowledge base / FAQ content

- Full conversation history

- New user message

This context compounds fast. Message 1 costs little. Message 30 can cost 30–50x more, because the entire history is resent along with it.

Real example: One reply used 22,696 input tokens (vs. 564 output). The estimate assumed ~500 input. Reality: roughly 45x the estimate.

Mental model: It's like adding one page to a document but reprinting the whole document each time.
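To see the compounding in numbers, here is a rough sketch. It assumes every message (user or assistant) is about the same size and that the full history is resent on each turn; the 200 and 150 token figures are illustrative assumptions, not measurements.

```python
def input_tokens_for_turn(turn: int, fixed_tokens: int, tokens_per_message: int) -> int:
    """Input tokens sent on a given turn (1-indexed) when the full history is resent.

    Before turn N the history holds N-1 user messages and N-1 replies;
    the new user message is sent on top of the fixed context.
    """
    history_messages = 2 * (turn - 1)
    return fixed_tokens + (history_messages + 1) * tokens_per_message


def conversation_input_tokens(turns: int, fixed_tokens: int, tokens_per_message: int) -> int:
    """Total input tokens billed across the whole conversation."""
    return sum(
        input_tokens_for_turn(t, fixed_tokens, tokens_per_message)
        for t in range(1, turns + 1)
    )


# Illustrative assumptions: 200 tokens of fixed context, 150 tokens per message.
first = input_tokens_for_turn(1, fixed_tokens=200, tokens_per_message=150)
thirtieth = input_tokens_for_turn(30, fixed_tokens=200, tokens_per_message=150)
total = conversation_input_tokens(30, fixed_tokens=200, tokens_per_message=150)
print(first, thirtieth, total)  # prints "350 9050 141000"
```

With these assumed numbers, turn 30 sends about 26x the input of turn 1, and the whole 30-turn chat costs hundreds of times a single exchange. The exact multiple depends on how large the fixed context is relative to each message.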

3. Five key cost drivers

- Conversation history: sent every time. A 30-message chat can cost 100x or more what a single exchange costs.

- System prompts (aka instructions): always included. A bloated 3,000-token prompt vs. a lean 300-token one is a 10x difference per call.

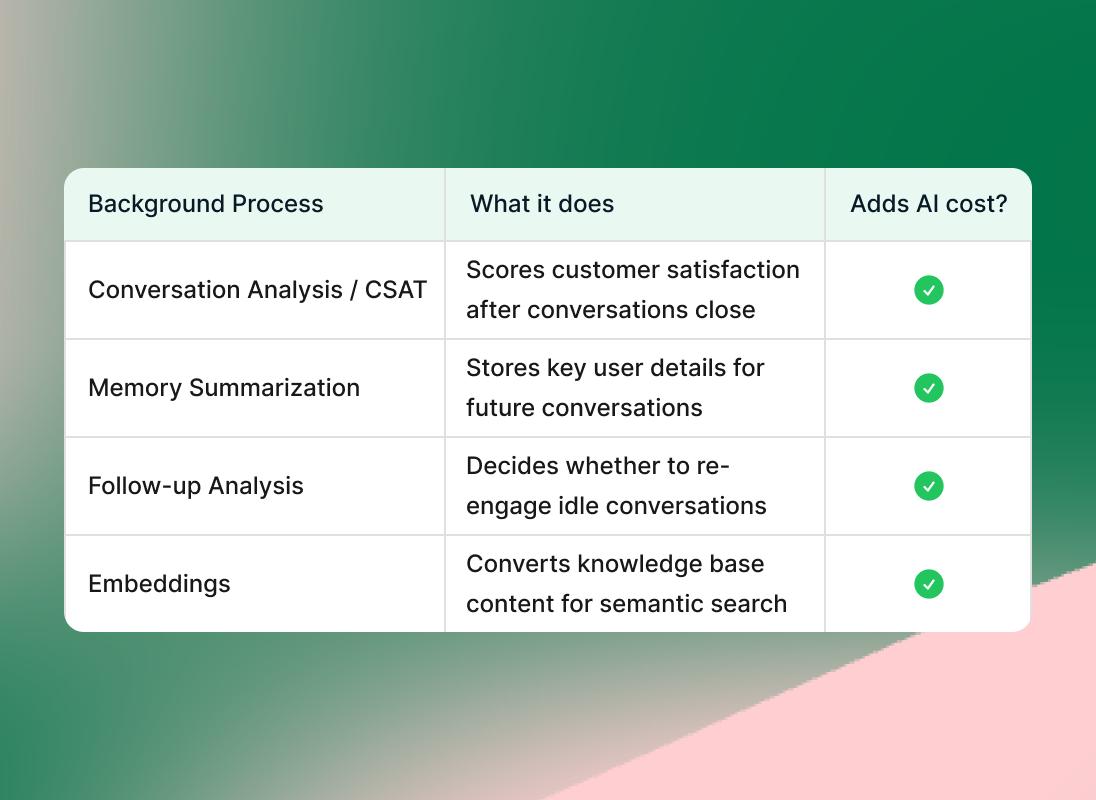

- Background processes: CSAT, memory summarization, follow-ups, embeddings. Often 3–5 AI calls per message.

- Media messages: voice notes, PDFs, and images can consume thousands of tokens each.

- Traffic spikes: viral campaigns create 10x-volume days the estimate couldn't foresee.

Background processes add up: Modern AI assistant platforms run multiple behind-the-scenes tasks, like conversation analysis, follow-up, and memory summarization, that each contribute to your AI costs.
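As a quick worked example of the system prompt driver, the sketch below multiplies prompt size by monthly volume. It reuses the 100 messages/day figure from section 1 and assumes exactly one AI call per message; the function name is hypothetical.

```python
def monthly_prompt_tokens(prompt_tokens: int, messages_per_day: int, days: int = 30) -> int:
    """Tokens spent per month just on resending the system prompt.

    Assumes one AI call per message; background calls are not counted here.
    """
    return prompt_tokens * messages_per_day * days


bloated = monthly_prompt_tokens(3000, messages_per_day=100)
lean = monthly_prompt_tokens(300, messages_per_day=100)
print(f"{bloated:,} vs {lean:,} tokens/month")  # prints "9,000,000 vs 900,000 tokens/month"
```

If background processes also carry the system prompt on their own calls, the gap would widen further, though whether they do depends on the platform.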

4. Context engineering principles

Cheaper models help. But context engineering, deliberately shaping what goes into the context window, delivers the biggest wins. Input tokens dominate costs, and input is yours to control.

Pillar 1: Lean system prompts (they're sent on every call, forever)

- Define role in 2–3 sentences (not 20)

- Use bullets, not paragraphs

- Cut duplicates ("always be polite" once is enough)

- Drop rare edge cases

Target: under 500 tokens for a simple assistant; under 1,500 for a complex one
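One way to keep an eye on those targets is a quick budget check. The sketch below uses a rough rule of thumb of about 4 characters per token for English text, which is an assumption and not a real tokenizer; for accurate counts you would use the tokenizer that matches your model. All names here are hypothetical.

```python
import math

CHARS_PER_TOKEN = 4  # rough heuristic for English text (assumption, not a real tokenizer)


def rough_token_count(text: str) -> int:
    """Approximate token count from character length."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def within_budget(prompt: str, complex_assistant: bool = False) -> bool:
    """Check a system prompt against the targets above: <500 simple, <1,500 complex."""
    budget = 1500 if complex_assistant else 500
    return rough_token_count(prompt) < budget


prompt = "You are a helpful condo assistant. Answer briefly and politely."
print(rough_token_count(prompt), within_budget(prompt))
```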

Pillar 2: Smart Knowledge Retrieval (RAG)

Dumping full FAQs into every call is the naive approach. RAG retrieves only the relevant sections for each specific question.

How this looks:

- The user asks a question

- The system searches the FAQ (or knowledge base) for the most relevant pieces

- Only those specific, relevant sections are sent to the AI

- The AI answers using just what it needs

Here is an example of how the resulting prompt can be laid out:

[INSTRUCTIONS]

You are a helpful condo assistant. Use the info below to answer.

Relevant knowledge:

- Pool hours: Monday–Sunday, 8:00 AM – 10:00 PM.

- Pool closes during holidays and maintenance days.

Resident question: "What are the pool hours?"
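The retrieval flow above can be sketched roughly as follows. To stay self-contained, this example scores chunks by simple keyword overlap as a stand-in for real semantic search (which would typically use embeddings). The knowledge base entries marked hypothetical, the stopword list, and all function names are assumptions for illustration.

```python
import re

# The first two entries come from the example above; the others are hypothetical filler.
KNOWLEDGE_BASE = [
    "Pool hours: Monday–Sunday, 8:00 AM – 10:00 PM.",
    "Pool closes during holidays and maintenance days.",
    "Parking: each unit has one assigned space.",    # hypothetical
    "Trash pickup happens on Tuesdays and Fridays.",  # hypothetical
]

STOPWORDS = {"a", "an", "and", "are", "is", "of", "on", "the", "to", "what", "when"}


def keywords(text: str) -> set:
    """Lowercased words and numbers, minus common stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question: str, knowledge: list, top_k: int = 2) -> list:
    """Return up to top_k chunks sharing at least one keyword with the question."""
    q = keywords(question)
    scored = [(len(q & keywords(chunk)), chunk) for chunk in knowledge]
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)  # stable: ties keep original order
    return [chunk for _, chunk in relevant[:top_k]]


def build_prompt(question: str, knowledge: list) -> str:
    """Assemble a prompt in the layout shown above, using only retrieved chunks."""
    facts = "\n".join(f"- {chunk}" for chunk in retrieve(question, knowledge))
    return (
        "[INSTRUCTIONS]\n"
        "You are a helpful condo assistant. Use the info below to answer.\n\n"
        f"Relevant knowledge:\n{facts}\n\n"
        f'Resident question: "{question}"'
    )


print(build_prompt("What are the pool hours?", KNOWLEDGE_BASE))
```

Only the two pool entries reach the model; the parking and trash entries never enter the context, so they cost nothing on this call.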

Pillar 3: Conversation history management

- Sliding window: Last 8–10 messages only

- Summarization: Compress old history into key facts

- Selective memory: Keep only meaningful context

- Session reset: Fresh start after resolution
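A sliding window with optional summarization might look something like this. It's a sketch under assumptions: messages are plain dicts with `role` and `content` keys, and the `summarize` callback is a placeholder for whatever produces the summary (in practice, often a cheap AI call).

```python
from typing import Callable, Dict, List, Optional

Message = Dict[str, str]


def trim_history(
    history: List[Message],
    window: int = 10,
    summarize: Optional[Callable[[List[Message]], str]] = None,
) -> List[Message]:
    """Keep the last `window` messages; optionally compress older ones into one summary."""
    if window <= 0:
        raise ValueError("window must be positive")
    if len(history) <= window:
        return list(history)
    older, recent = history[:-window], history[-window:]
    if summarize is None:
        return recent  # plain sliding window
    summary = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarize(older),
    }
    return [summary] + recent


# Hypothetical 30-message conversation.
history = [
    {"role": "user" if i % 2 == 0 else "assistant", "content": f"message {i}"}
    for i in range(30)
]

print(len(trim_history(history)))  # prints 10

# Placeholder summarizer; a real one would extract key facts from the older messages.
compact = trim_history(history, summarize=lambda msgs: f"{len(msgs)} earlier messages")
print(len(compact))  # prints 11
```

A session reset is the simplest case of all: start the next conversation with an empty history once the issue is resolved.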

5. Your action checklist

- Audit your system prompt. Cut it in half and test quality. You'll usually be surprised.

- Retrieve, don't inject. Use semantic search for relevant knowledge only.

- Cap history. The last 8–10 turns are almost always enough.

- Disable unused features. Turn off CSAT/memory if you're not acting on the data.

- Match model to task. Cheap/fast for Q&A; premium only for reasoning.

- Design for fewer turns. Quick replies and structured flows reduce turns and cost.

- Gate media. Enable voice/image/document processing only when needed.

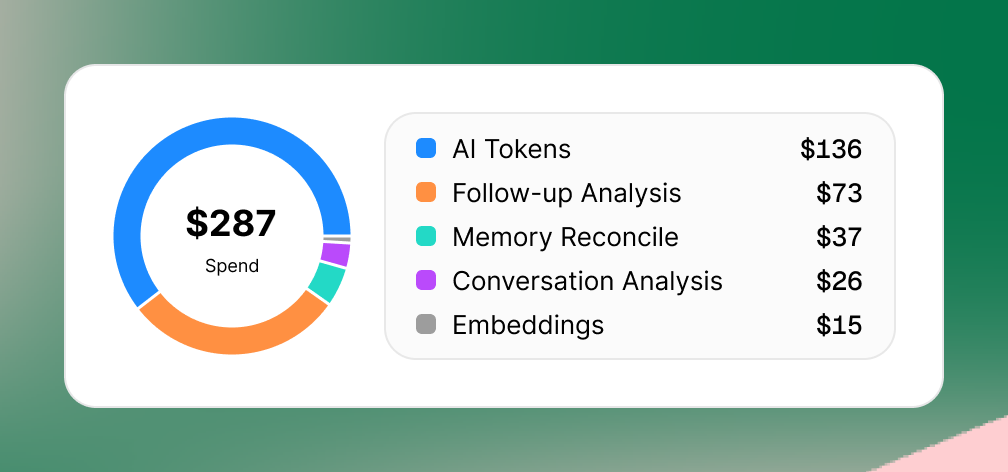

- Monitor by event. Track tokens vs. background processes vs. media weekly.

Image 2: Dashboard widget showing $287 in AI spend as a donut chart. The legend itemizes the cost categories: AI Tokens ($136), Follow-up Analysis ($73), Memory Reconcile ($37), Conversation Analysis ($26), and Embeddings ($15).
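Monitoring by event can be as simple as grouping spend by category. The sketch below uses the dollar figures from the dashboard image; treating everything other than "AI Tokens" as a background process is an assumption made for this example.

```python
# Figures from the dashboard image (USD).
spend = {
    "AI Tokens": 136,
    "Follow-up Analysis": 73,
    "Memory Reconcile": 37,
    "Conversation Analysis": 26,
    "Embeddings": 15,
}

# Assumption: these categories count as background processes.
BACKGROUND = {"Follow-up Analysis", "Memory Reconcile", "Conversation Analysis", "Embeddings"}


def shares(costs: dict) -> dict:
    """Each category's fraction of total spend."""
    total_cost = sum(costs.values())
    return {name: amount / total_cost for name, amount in costs.items()}


total = sum(spend.values())
background_total = sum(amount for name, amount in spend.items() if name in BACKGROUND)

for name, share in sorted(shares(spend).items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<22} {share:6.1%}")
print(f"Background share: {background_total / total:.1%}")  # prints "Background share: 52.6%"
```

Under that grouping, background processes account for more than half of the $287, which is the kind of thing a per-message estimate would never surface.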

FAQs

1. How do I reduce token usage in my AI chatbot without hurting response quality?

Start with the context: trim the system prompt, retrieve knowledge instead of injecting it, and cap conversation history. Then match the AI model to each task. Premium models excel at complex reasoning, multi-step analysis, or sensitive conversations, but faster, cheaper models handle straightforward Q&A just as well. That single change can often cut costs by around 3x.
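A very simple version of model matching is a routing function that picks a model tier per message. The sketch below is only a heuristic illustration: the model names are hypothetical placeholders, and the keyword list and word-count threshold are assumptions you would tune against your own traffic.

```python
# Hypothetical model identifiers; substitute whatever your platform offers.
FAST_MODEL = "fast-model"
PREMIUM_MODEL = "premium-model"

# Assumed hints that a message needs multi-step reasoning.
REASONING_HINTS = ("why", "compare", "explain", "analyze", "step by step")


def pick_model(message: str, sensitive: bool = False) -> str:
    """Route straightforward Q&A to the cheap model; reasoning or sensitive chats to premium."""
    text = message.lower()
    long_message = len(text.split()) > 60
    needs_reasoning = long_message or any(hint in text for hint in REASONING_HINTS)
    return PREMIUM_MODEL if (sensitive or needs_reasoning) else FAST_MODEL


print(pick_model("What are the pool hours?"))  # prints "fast-model"
print(pick_model("Compare the two plans and explain which is cheaper"))  # prints "premium-model"
```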

2. What is context engineering for AI chatbots and why does it matter?

Context engineering means intentionally controlling what enters the AI's context window on every message: system prompt, knowledge base, and conversation history. These three elements drive 90%+ of input token costs, and you control all of them. Trimming prompts and capping history can deliver 5x–20x savings through design choices anyone can implement today.

3. How much can context engineering reduce AI chatbot costs?

Teams applying context engineering (leaner system prompts, RAG-based knowledge retrieval, conversation history caps) routinely achieve 5x–20x cost reductions without changing AI models or sacrificing answer quality. Savings from system prompts and history management compound across every single message, making this the highest-leverage optimization for agencies and builders.

4. Should I disable CSAT scoring and memory features to save AI costs?

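Only disable background AI processes you're not actively using. If nobody acts on the CSAT scores or memory data, turning those features off removes the extra AI calls they trigger on each message.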

5. What’s the fastest way to cut AI chatbot token costs right now?

Audit and trim your system prompt. This one block of text is sent on every AI call, across all conversations. Cut verbose instructions, remove duplicates, use bullets instead of paragraphs, and test the shorter version. You'll see savings within hours, often with better clarity.

6. Will AI chatbot costs get cheaper automatically as models improve?

Yes, but understanding token mechanics gives you an enduring edge. Models grow more efficient yearly, platforms add automatic context optimization, and pricing drops steadily. Builders who master context engineering and model selection will always outpace those relying solely on vendor improvements, regardless of platform.

The new mental model

Estimates give direction based on averages, and that's genuinely useful. Real conversations run longer and richer, with background features active. Once you understand the drivers (context size, background processes, traffic spikes), you have real levers to pull. Context engineering alone can cut costs 5x–20x, no model changes needed.

"The most expensive thing in AI isn't the model. It's the tokens you didn't realize you were sending."

Agencies and builders who master this build leaner systems, explain costs confidently to clients, and scale predictably.

Start building smarter: try Invent free today.