TL;DR

Most AI failures are context problems.

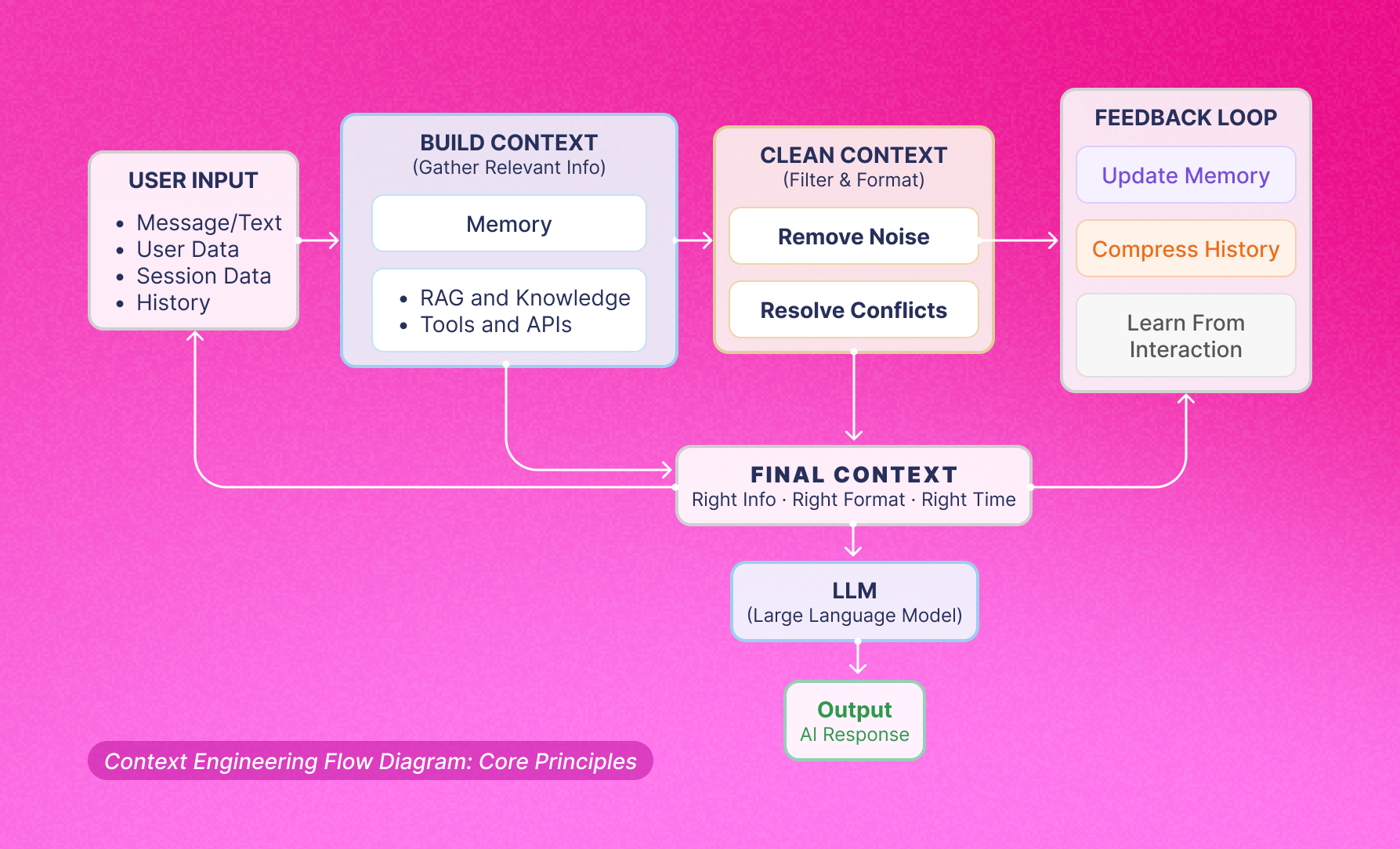

Context engineering is the next evolution of AI system design: the discipline of shaping what the model knows and sees throughout an interaction. It’s how you turn static prompts into intelligent workflows that adapt to users, tasks, and tools in real time.

Bigger context windows aren’t the answer; better context pipelines are.

1. It's not just "Better Prompting"

Most people treat context engineering as fancy prompt engineering. It's not.

Prompt engineering = crafting a single input string

Context engineering = designing an entire system that dynamically constructs what the model sees, before, during, and across interactions

Context engineering is what prompt engineering becomes when you go from:

Experimenting → Deploying

One person → An entire team

One chat → A live business system

It includes: memory, retrieval (RAG), tools, conversation history, instructions, user state, and more.

The prompt is just one piece of a much bigger engineered pipeline.

As Phil Schmid (Hugging Face) puts it:

Context Engineering is the discipline of designing and building dynamic systems that provides the right information and tools, in the right format, at the right time, to give a LLM everything it needs to accomplish a task.

2. Context is dynamic, not static

Many still treat context as a fixed block of text.

In reality, context must be built dynamically:

- Tailored to each individual request.

- Shaped by user state, time, task, retrieved data, and prior conversation turns.

Static context leads to brittle systems that fail in real-world usage.
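As a minimal sketch of what "built dynamically" can look like, the function below assembles the context for a single request from the state known at that moment. The `RequestState` fields, the section headings, and the `max_history` cutoff are all illustrative assumptions, not a prescribed format.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class RequestState:
    """Everything known about one request. All field names are hypothetical."""
    user_name: str
    user_plan: str
    question: str
    history: list[str] = field(default_factory=list)    # prior turns, oldest first
    retrieved: list[str] = field(default_factory=list)  # snippets from some retriever


def build_context(state: RequestState, now: datetime, max_history: int = 4) -> str:
    """Assemble the text the model will see for this specific request."""
    sections = [
        "## Instructions\nYou are a support assistant. Answer using the reference material.",
        f"## User\nName: {state.user_name}\nPlan: {state.user_plan}",
        f"## Current time\n{now.isoformat(timespec='minutes')}",
    ]
    # Optional sections appear only when there is something to put in them.
    if state.retrieved:
        sections.append("## Reference material\n" + "\n".join(f"- {s}" for s in state.retrieved))
    if state.history:
        recent = state.history[-max_history:]  # keep only the latest turns
        sections.append("## Recent conversation\n" + "\n".join(recent))
    sections.append(f"## Question\n{state.question}")
    return "\n\n".join(sections)
```

Calling `build_context(state, datetime.now(timezone.utc))` on two different requests yields two different contexts, which is the point: nothing here is a fixed block of text.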

3. Context can be poisoned, distracted, or conflicted

Builders often focus only on adding context, ignoring how it can harm performance.

Common failure modes:

- Context poisoning: bad or misleading information.

- Context distraction: excessive info that buries key signals.

- Context clash: conflicting instructions (from system prompt, retrieved docs, or user input).
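One way to guard against distraction and clash is a cleaning pass before anything reaches the model. The sketch below assumes every candidate item carries a source label, a topic key, and a relevance score from some upstream ranker; the `SOURCE_PRIORITY` order and the 0.5 threshold are hypothetical choices. It does not address poisoning, which needs source validation upstream.

```python
from dataclasses import dataclass

# Hypothetical trust order: a lower number wins when two items disagree.
SOURCE_PRIORITY = {"system": 0, "user": 1, "retrieved": 2}


@dataclass
class ContextItem:
    source: str       # "system", "user", or "retrieved"
    topic: str        # what the item is about, e.g. "refund_window"
    text: str
    relevance: float  # 0.0 to 1.0, assumed to come from a retriever or ranker


def clean_context(items: list[ContextItem], min_relevance: float = 0.5) -> list[ContextItem]:
    """Drop low-relevance items, then keep one item per topic by source priority."""
    # Distraction: filter out weak matches (system instructions are always kept).
    kept = [i for i in items if i.source == "system" or i.relevance >= min_relevance]
    # Clash: when several items cover the same topic, the most trusted source wins.
    best: dict[str, ContextItem] = {}
    for item in kept:
        current = best.get(item.topic)
        if current is None or SOURCE_PRIORITY[item.source] < SOURCE_PRIORITY[current.source]:
            best[item.topic] = item
    return list(best.values())
```

A fixed priority order is the simplest policy; a production system might instead surface the conflict to the model or to a human reviewer.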

4. It’s the #1 reason agents fail

Model errors are often caused by bad context, not weak model reasoning.

Examples include:

- Missing essential information.

- Overwhelming noise hiding key data.

- Lack of memory of past steps or decisions.

- Poorly formatted tool outputs entering the prompt.

5. Context Engineering is a systems design problem

- Often mistaken for a writing skill, but really about architecture and orchestration.

Key systemic questions:

- What gets stored in memory?

- What is retrieved, and when?

- How is conversation history compressed over time?

- How are tool outputs normalized before entering context?

- Especially critical for multi-agent and conversational systems (e.g., a WhatsApp assistant serving 30 condominiums).
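To make the tool-output question concrete, here is a small sketch of a normalization step. The `<tool_result>` wrapper, the 500-character cap, and the function name are assumptions chosen for illustration; the idea is only that every tool result enters the context in one compact, predictable shape.

```python
import json


def normalize_tool_output(tool_name: str, raw: str, max_chars: int = 500) -> str:
    """Turn a raw tool result into a compact, predictable block of text."""
    try:
        parsed = json.loads(raw)
        # Sorted keys and compact separators give a stable rendering without stray whitespace.
        body = json.dumps(parsed, sort_keys=True, separators=(",", ":"))
    except json.JSONDecodeError:
        # Not JSON: collapse runs of whitespace and newlines into single spaces.
        body = " ".join(raw.split())
    if len(body) > max_chars:
        body = body[:max_chars] + " [truncated]"
    # Hypothetical wrapper format so the model can tell tool data from instructions.
    return f'<tool_result name="{tool_name}">\n{body}\n</tool_result>'
```

For example, `normalize_tool_output("weather", '{ "temp": 21,\n "city": "Lisbon" }')` produces a single-line JSON body inside the wrapper, regardless of how the tool formatted its response.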

6. Bigger context windows ≠ Better results

Large token windows (e.g., 1M+) tempt users to “just dump everything.”

Issues:

- Models struggle with the “lost in the middle” effect, where information buried in the middle of a long context is recalled less reliably.

- Higher cost and latency from huge context payloads.

Quality and relevance outperform raw size.
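A simple way to put relevance ahead of size is to pack the context against a token budget. The sketch below assumes each chunk already has a relevance score, and it estimates tokens at roughly four characters each, which is a crude assumption rather than a real tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Crude estimate of about 4 characters per token. An assumption, not a tokenizer."""
    return max(1, len(text) // 4)


def pack_context(chunks: list[tuple[float, str]], budget: int) -> list[str]:
    """Greedily pick the most relevant chunks that fit within `budget` estimated tokens."""
    selected: list[str] = []
    used = 0
    # Visit chunks from most to least relevant; skip any that would overflow the budget.
    for score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        cost = estimate_tokens(text)
        if used + cost > budget:
            continue
        selected.append(text)
        used += cost
    return selected
```

With a budget in place, a long but marginal document is left out even when the window could technically hold it.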

7. Context is the bridge between AI and real-world knowledge

Context engineering enables grounding, making AI aware of:

- Business logic and rules.

- User and session history.

- Organizational documents and procedures.

- Real-time task or system state.

Without context, even top models behave like “brilliant people with amnesia.”

8. It will matter even more as models improve

There is a misconception that smarter models make context less important.

Reality:

- Advanced models depend more on rich, structured context.

- Performance bottleneck shifts from model power → context quality.

- The higher the ceiling, the higher the need for precision context.

The bottom line

- Context Engineering = the art and science of giving AI the right information, at the right time, in the right format.

The real innovators think at the system level, designing intelligent pipelines that make AI:

- Informed

- Situationally aware

- Contextually sharp

How context engineering works: User input is transformed through memory, knowledge, and conflict resolution into clean, relevant context, then used by LLMs for precise responses and continuous learning.

FAQs

1. How do I implement Retrieval-Augmented Generation for improved context?

Retrieval Augmented Generation (RAG) is a technique that combines a retrieval system with a generative language model to produce more accurate, grounded, and context-aware responses.

To implement RAG for improved context:

- Build a knowledge base: Collect and structure your domain-specific documents, FAQs, manuals, or databases into a vector store (e.g., Pinecone, Weaviate, or pgvector).

- Organize and index your content: Break your documents into smaller chunks and embed them so the system can match on meaning, not just keywords. This allows it to recognize relevant information even when users phrase their questions differently.

- Enable smart search: When a user asks something, the system automatically scans your content library behind the scenes and surfaces the most relevant pieces of information to inform the response, much like a knowledgeable assistant who knows exactly which page of a manual to look at.

- Inject context into the prompt: The retrieved chunks are inserted into the LLM's prompt as context, allowing the model to generate responses grounded in your specific data.

- Get accurate, context-aware answers: The AI combines the information retrieved from your content with its own broad knowledge to generate a response that is specific, relevant, and helpful. As an end user, you can improve the quality of answers by asking clear and specific questions, providing relevant details about your situation, and giving feedback when a response misses the mark. Every correction helps the system learn what matters most to you.

- Keep improving over time: A great AI assistant is never truly "finished." By regularly reviewing how users interact with the system, what questions get answered well, where it falls short, and what feedback users provide, teams can continuously fine-tune and improve the experience.

RAG is especially powerful for customer support bots, internal knowledge assistants, and any application where up-to-date or proprietary information is critical.
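The chunk, index, search, and inject steps above can be sketched end to end in a few lines. This is a toy under stated assumptions: `embed` is a word-count stand-in for a real embedding model (so it matches keywords, not meaning), the index is an in-memory list rather than a vector store, and the sample documents and all function names are made up for illustration.

```python
import math
import re
from collections import Counter


def chunk(text: str, max_words: int = 40) -> list[str]:
    """Split a document into pieces of at most `max_words` words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, index: list[tuple[str, Counter]], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(query: str, chunks: list[str]) -> str:
    """Inject the retrieved chunks into the prompt as context."""
    context = "\n".join(f"- {c}" for c in chunks)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


# Hypothetical knowledge base for a building-management assistant.
docs = [
    "Refunds are available within 30 days of purchase.",
    "The pool is open from 8am to 10pm every day.",
    "Parking permits are renewed each January.",
]
index = [(c, embed(c)) for d in docs for c in chunk(d)]
question = "When is the pool open?"
prompt = build_prompt(question, retrieve(question, index, k=1))
```

Swapping `embed` for a real embedding model and the list for a vector store such as pgvector changes the quality of retrieval, not the shape of the pipeline.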

2. How does context engineering enhance customer experience in digital services?

Context engineering dramatically improves customer experience by ensuring that AI systems understand not just what a user is asking, but who they are, where they are in their journey, and what they actually need. Here's how it makes a difference:

- Personalization at scale: By feeding relevant user history, preferences, and behavioral signals into the AI's context window, responses feel tailored rather than generic.

- Reduced friction: Customers don't need to repeat themselves. A well-engineered context carries prior interactions, account details, and session state seamlessly across touchpoints.

- Faster issue resolution: Agents and chatbots armed with rich context can diagnose problems and propose solutions in fewer turns, reducing handle time and frustration.

- Proactive assistance: Context-aware systems can anticipate needs, for example by surfacing a billing FAQ when a user navigates to the payments section, before the customer even asks. For more practical examples, see Amit Eyal Govrin's blog post on Kubiya.ai.

- Consistent omnichannel experience: Whether a customer reaches out via chat, email, or voice, context engineering ensures continuity and coherence across every channel.

- Higher trust and satisfaction: When AI responses are relevant, accurate, and timely, customers feel heard and valued, directly boosting CSAT and NPS scores.

In digital services, the difference between a frustrating and a delightful experience often comes down to how well context is captured, maintained, and utilized.

3. What is context engineering in artificial intelligence?

Context engineering is the discipline of deliberately designing, structuring, and managing the information provided to an AI model, particularly a large language model (LLM), to maximize the quality, relevance, and accuracy of its outputs.

Context engineering is what prompt engineering becomes when you go from:

Experimenting → Deploying

One person → An entire team

One chat → A live business system

4. How can I implement context engineering in chatbot development?

- Define your context layers: Identify what types of context your chatbot needs: user profile data, conversation history, business rules, real-time information, and retrieved knowledge.

- Design a system prompt architecture: Craft a robust system prompt that sets the chatbot's role, tone, constraints, and key instructions. This is the foundation of your context.

- Implement conversational memory: Use short-term memory to maintain the flow of a single conversation and long-term memory (stored in a database) to personalize future interactions based on past sessions.

- Integrate dynamic data retrieval (RAG): Connect your chatbot to internal knowledge bases, product catalogs, or documentation so it can pull in accurate, real-time context on demand.

- Apply context compression and summarization: As conversations grow long, summarize earlier turns to preserve the context window for the most relevant recent information.

- Use structured context injection: Format user metadata, session variables, and retrieved content in a consistent, parseable way so the model can easily extract and use it.

- Test for context failures: Identify edge cases where missing or conflicting context leads to poor responses, and build fallback mechanisms.

- Monitor and iterate: Log what context was provided for each interaction and correlate it with user satisfaction signals to refine your context strategy over time.

Effective context engineering turns a generic chatbot into a genuinely intelligent assistant, one that feels aware, responsive, and trustworthy to every user it serves.
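The memory and compression steps in the list above might look like the following sketch. It keeps the last few turns verbatim and folds older ones into a running summary. `naive_summarize` is a placeholder that simply truncates each turn; in practice that step would likely be an LLM call, which is an assumption here, as are the class name and the `max_recent` default.

```python
from dataclasses import dataclass, field
from typing import Callable


def naive_summarize(turns: list[str]) -> str:
    """Placeholder summarizer: keeps the first 60 characters of each turn."""
    return " | ".join(t[:60] for t in turns)


@dataclass
class ConversationMemory:
    """Short-term memory with a rolling summary. Names and defaults are hypothetical."""
    max_recent: int = 4
    summarize: Callable[[list[str]], str] = naive_summarize
    summary: str = ""
    recent: list[str] = field(default_factory=list)

    def add(self, turn: str) -> None:
        """Record a turn; fold anything beyond `max_recent` into the summary."""
        self.recent.append(turn)
        if len(self.recent) > self.max_recent:
            overflow = self.recent[:-self.max_recent]
            self.recent = self.recent[-self.max_recent:]
            to_fold = ([self.summary] if self.summary else []) + overflow
            self.summary = self.summarize(to_fold)

    def render(self) -> str:
        """Produce the history section to place in the model's context."""
        parts = []
        if self.summary:
            parts.append("Summary of earlier turns: " + self.summary)
        parts.extend(self.recent)
        return "\n".join(parts)
```

Persisting `summary` per user in a database is one way to get the long-term memory described above, while `recent` covers the flow of the current conversation.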

Final thoughts

If you’re building AI assistants, stop thinking like a prompt writer and start thinking like a context engineer.

Your system doesn’t need more tokens; it needs smarter context.

Start designing context pipelines that help your AI assistant actually understand your world.