TL;DR

At Invent, we power AI-driven auto follow-ups on WhatsApp to engage clients after hours, on weekends, and during holidays. When clients are unavailable, our AI identifies the optimal moment to re-engage, keeping conversations moving and deals closing without manual intervention.

But operating AI at this level of autonomy raises a critical question: how do we actually know it's working as intended?

That's where AI observability comes in, and it's fundamentally different from what most teams expect.

AI observability = the ability to trace, replay, and evaluate every AI decision in production, from prompt and tool use to handoffs and outcomes.

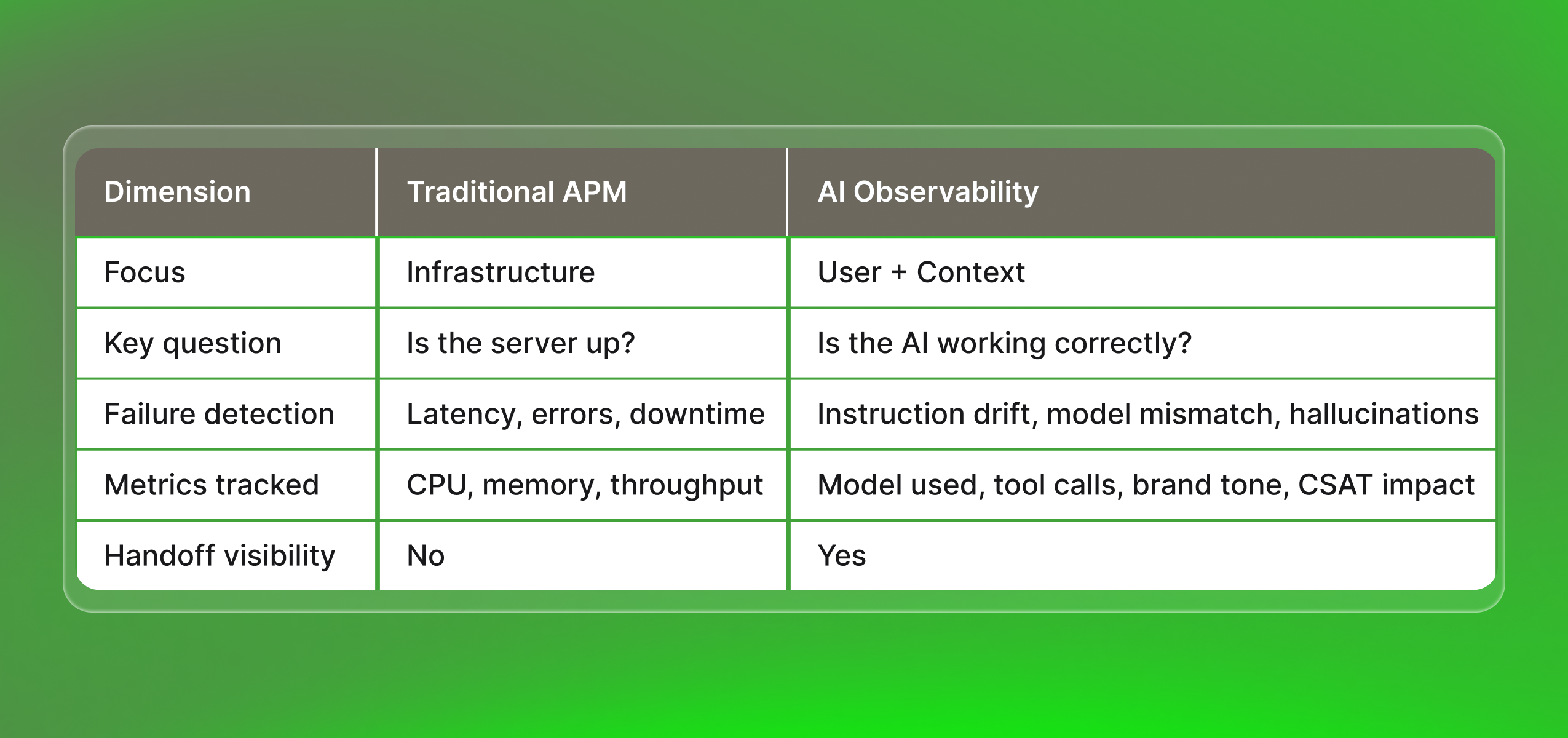

Why traditional APM isn't enough for AI

Traditional Application Performance Monitoring (APM) tracks infrastructure health: latency, errors, throughput, and resource usage across services and databases. It tells us if the system is running.

AI observability asks a deeper set of questions:

- Is the assistant following its system instructions?

- Is it maintaining brand tone across WhatsApp, web, SMS, and email?

- Is it using tools (Stripe, Odoo, CRM, calendar, search) correctly?

- Is it staying aligned with what the user is actually trying to accomplish?

It's inherently user- and context-centric. We care whether the AI:

- Routed a lead properly

- Resolved a support ticket

- Respected memory and privacy rules

- Coordinated a smooth handoff to a human

All of this can fail silently, even when every infrastructure metric looks green.

In multi-model, agentic setups (GPT, Claude, Gemini, Grok + live tools), observability must also capture:

- Which model was selected

- Which tools executed

- How those choices affected cost, quality, and CSAT
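As a minimal sketch of what capturing these fields could look like, the record below stores the selected model, the tool calls, the cost, and an optional CSAT score for each decision, then rolls them up per model. The names `DecisionTrace`, `ToolCall`, and `summarize_by_model` are hypothetical and chosen for illustration; they are not taken from any particular platform.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from statistics import mean
from typing import Dict, List, Optional


@dataclass
class ToolCall:
    name: str  # e.g. "crm" or "calendar" (illustrative labels)
    ok: bool   # whether the call succeeded


@dataclass
class DecisionTrace:
    conversation_id: str
    channel: str
    model: str
    tool_calls: List[ToolCall] = field(default_factory=list)
    cost_usd: float = 0.0
    csat: Optional[int] = None  # survey score; None if the user did not answer


def summarize_by_model(traces: List[DecisionTrace]) -> Dict[str, dict]:
    """Group traces by model and compute cost, CSAT, and tool error rate."""
    grouped = defaultdict(list)
    for trace in traces:
        grouped[trace.model].append(trace)

    summary = {}
    for model, items in grouped.items():
        scores = [t.csat for t in items if t.csat is not None]
        calls = [c for t in items for c in t.tool_calls]
        summary[model] = {
            "decisions": len(items),
            "total_cost_usd": round(sum(t.cost_usd for t in items), 4),
            "avg_csat": mean(scores) if scores else None,
            "tool_error_rate": (
                sum(not c.ok for c in calls) / len(calls) if calls else None
            ),
        }
    return summary
```

Keeping cost, tool outcomes, and CSAT on the same record is what makes it possible to compare them per model later, rather than joining three separate logs after the fact.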

From infrastructure to intelligence: See how AI Observability redefines monitoring, focusing on user context, model behavior, and real-world outcomes all the way to handoff.

The most common ways AI systems fail

The most frequent failure we encounter isn't hallucination or downtime; it's model-task mismatch. Teams without broad cross-model experience often default to familiar options, and the results can be subtle but costly.

Grok 4.1 leaked internal reasoning

Grok 4.1 surfaced its internal reasoning steps directly to end users. This wasn't a hallucination; it was a behavioral mismatch between the model's defaults and the product's requirements. Without observability, that failure hides in plain sight.
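One way to catch this class of failure is to scan outgoing replies before they reach the user. The sketch below is only illustrative: the marker patterns are assumptions, since the text that signals leaked reasoning varies by model and prompt, and `find_reasoning_leaks` is a hypothetical helper name.

```python
import re
from typing import List

# Assumed markers of leaked reasoning; tune these to what your models emit.
LEAK_PATTERNS = [
    re.compile(r"</?think(ing)?>", re.IGNORECASE),
    re.compile(
        r"^\s*(reasoning|chain of thought|internal notes?)\s*:",
        re.IGNORECASE | re.MULTILINE,
    ),
]


def find_reasoning_leaks(reply: str) -> List[str]:
    """Return the first match of each leak pattern found in a reply."""
    hits = []
    for pattern in LEAK_PATTERNS:
        match = pattern.search(reply)
        if match:
            hits.append(match.group(0).strip())
    return hits
```

A non-empty result would typically be logged against the trace and the reply held back or regenerated, so the mismatch shows up in a dashboard rather than in a customer's chat.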

Gemini 2.5 Flash hallucinates on knowledge gaps

Gemini 2.5 Flash tends to hallucinate when the information it needs isn't in its knowledge base (instructions or system prompt). When context is missing, the model fills the gap. The fix isn't always switching models; often it's enriching the knowledge architecture.

Hallucinations can stem from a gap in the knowledge base or from the model itself.

Choosing the right model size

- Small models (Nano, Lite, and Mini versions): Efficient for FAQ-style tasks that don't require escalation.

- Large models (Opus, Sonnet, Gemini Pro and Flash series, GPT series): Required for complex, multi-step reasoning.

Observability tells us over time whether model calibration is actually holding.
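One signal that calibration is slipping is a rising escalation rate on conversations handled by a small model. The sketch below tracks that rate over a rolling window; the class name `EscalationMonitor`, the window size, and the threshold are all assumptions to adjust against your own baseline.

```python
from collections import deque


class EscalationMonitor:
    """Rolling escalation rate for conversations served by one model tier."""

    def __init__(self, window: int = 100, max_rate: float = 0.2):
        self.outcomes = deque(maxlen=window)  # True means the chat escalated
        self.max_rate = max_rate

    def record(self, escalated: bool) -> None:
        self.outcomes.append(escalated)

    @property
    def rate(self) -> float:
        if not self.outcomes:
            return 0.0
        return sum(self.outcomes) / len(self.outcomes)

    def needs_review(self) -> bool:
        # Only judge once the window is full, to avoid noisy early alerts.
        window_full = len(self.outcomes) == self.outcomes.maxlen
        return window_full and self.rate > self.max_rate
```

When `needs_review()` returns `True`, the tasks routed to that tier may have outgrown it, which is a prompt to inspect traces before changing models.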

The real test: Can you replay a failed AI journey?

When evaluating observability platforms for LLMs, RAG pipelines, or agent-based systems, we use one benchmark:

Can we fully replay a failed AI journey?

Practical example: On a RAG chatbot backed by your website and Stripe, a failed payment journey should be reconstructable end-to-end:

- Exact user messages

- Which pages were retrieved

- Which Stripe API calls fired

- How the model interpreted the error

- How the human handoff unfolded in the inbox

If your tooling can't provide that, you have logs, not observability.
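The replay test can be sketched as a function that rebuilds one conversation's timeline from stored events and reports which kinds of evidence are missing. The `Event` shape and the `REQUIRED_KINDS` labels below are hypothetical; they simply mirror the five items in the list above.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set, Tuple

# Assumed event kinds, one per item a replay should be able to reconstruct.
REQUIRED_KINDS = {
    "user_message",
    "retrieval",
    "tool_call",
    "model_output",
    "handoff",
}


@dataclass(frozen=True)
class Event:
    conversation_id: str
    ts: float      # timestamp in seconds
    kind: str      # one of REQUIRED_KINDS
    payload: dict  # message text, page URL, API call details, and so on


def replay(
    events: Iterable[Event], conversation_id: str
) -> Tuple[List[Event], Set[str]]:
    """Return the ordered timeline for a conversation and any missing kinds."""
    timeline = sorted(
        (e for e in events if e.conversation_id == conversation_id),
        key=lambda e: e.ts,
    )
    missing = REQUIRED_KINDS - {e.kind for e in timeline}
    return timeline, missing
```

A non-empty `missing` set is the practical meaning of "logs, not observability": some step of the journey was never recorded, so it cannot be replayed.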

At Invent, we built observability per channel and extended it across every integration point. Having replayability and context continuity across the full AI-assisted journey is crucial.

What happens when you fly blind

We've seen the pattern repeat across client environments: fragmented tools, limited visibility, black-box AI behavior. In every case, the failures were both measurable and preventable.

The most damaging scenario? Poor visibility into AI-to-human handovers. When no one can see exactly where the AI stopped and a human should have engaged:

- Transitions become clunky

- Tickets get dropped

- CSAT scores fall

The journey breaks, but because no single tool captures the full picture, diagnosis never happens.

That's not a technical failure. It's an observability failure.

UX and product development must be integrated. Observability makes that real.
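The handover gap described above becomes visible once both sides of the transition are timestamped. As a rough sketch, assuming each handoff is stored as a dictionary with hypothetical keys `conversation_id`, `requested_at`, and `human_replied_at` (seconds), the function below lists conversations where no human picked up within a chosen SLA.

```python
from typing import Dict, List


def find_dropped_handoffs(
    handoffs: List[Dict], sla_seconds: float = 300
) -> List[str]:
    """Return conversation IDs where a human never replied or replied late."""
    dropped = []
    for handoff in handoffs:
        replied_at = handoff.get("human_replied_at")
        # A missing reply or a reply after the SLA both count as dropped.
        if replied_at is None or replied_at - handoff["requested_at"] > sla_seconds:
            dropped.append(handoff["conversation_id"])
    return dropped
```

The five-minute default is only a placeholder; the right threshold depends on the channel and on what was promised to the customer.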

Production readiness checklist

Before deploying AI in production, we recommend asking these 7 questions:

- Can we replay any failed AI journey end-to-end?

- Do we know which model was used for each decision?

- Can we trace every tool call (CRM, payments, calendar, search)?

- Is brand tone consistency monitored across channels?

- Are AI-to-human handoffs visible and auditable?

- Do we have real-time alerts for instruction drift or hallucinations?

- Can we correlate AI behavior with CSAT, conversion, and cost?

If you answered "no" to any of these, you're not production-ready.

FAQs

1. How should enterprises choose AI observability tools?

Prioritize compliance (SOC 2, audit trails), scale (billions of traces), hybrid coverage (ML + LLMs + agents), and ecosystem fit.

2. What pricing models do popular AI observability services use?

- Usage-based: Per trace/prediction/token (Phoenix, LangSmith)

- Host/entity-based: Per infra unit (Datadog, New Relic)

- Seats + usage: Per user + data volume

- Enterprise: Custom contracts with caps

3. Which AI observability platforms suit enterprise use?

Cloudflare AI Gateway (prompt observability), Arize Phoenix (drift), LangSmith (LLM debugging).

Building a culture around observability

We drive our strongest results by combining deep technical skill with radical transparency and async collaboration. Making cross-timezone PRs and open context-sharing daily habits has allowed us to ship faster and stay agile, but that momentum only holds when observability is embedded as a core product capability.

At Invent, we share insights from building AI-powered customer engagement platforms that operate reliably across WhatsApp, web, SMS, and email. Explore more at useinvent.com.